# How to Operationalize HEO: The BackTier Seven-Component Framework

Forget the platitudes. Forget the endless debates about algorithms and ranking factors. We’re beyond that. Hybrid Engine Optimization (HEO) isn't a theory; it's a layered system, built on the foundational principles of Search Engine Optimization (SEO), Answer Engine Optimization (AEO), and Generative Engine Optimization (GEO), operationalized by BackTier to dominate the answer engine era. Jori Ford laid the intellectual groundwork, but at BackTier, we built the engine. We’ve been in the trenches since before the term HEO even existed, watching the shift from keyword stuffing to entity understanding, from PageRank to presence over position. If you’re still chasing blue links, you’re already losing.

This isn't about getting found; it's about being the definitive answer. It's about ensuring that when an AI agent, a voice assistant, or a generative search engine is asked a question relevant to your domain, your entity is the one cited. This is an internal playbook, a no-nonsense guide to the seven components that make HEO a brutal, undeniable force in digital visibility. This is how you operationalize HEO. This is how you win.

## The Four Pillars of HEO Dominance

Before we dissect the framework, understand the bedrock. HEO stands on four immutable pillars. Ignore them at your peril.

1. **Crawl Logs:** Not just for technical audits anymore. Crawl logs are the heartbeat of how AI agents perceive your digital footprint. They reveal not just what's accessible, but how efficiently and authoritatively your entities are being processed. If your crawl budget is a mess, your entity clarity is compromised.
2. **Entity Clarity:** This is the non-negotiable foundation. If an AI cannot unambiguously identify, categorize, and understand your core entities (your business, your products, your services, your people), then you simply do not exist in the answer engine. Ambiguity is death.
3. **Answer-Ready Content:** This isn't blog post fluff. This is content engineered for synthesis. It’s structured, factual, and directly addresses user intent in a way that an AI can ingest, process, and output as a confident answer. It’s not about ranking; it’s about being the answer.
4. **AI Agent Visibility:** This is the ultimate prize. It’s about optimizing for the direct citation by generative AI models. It’s about ensuring your content is not just indexed, but understood and deemed authoritative enough to be surfaced as the primary source in a generative answer. Presence over position. Citation beats ranking. Always.

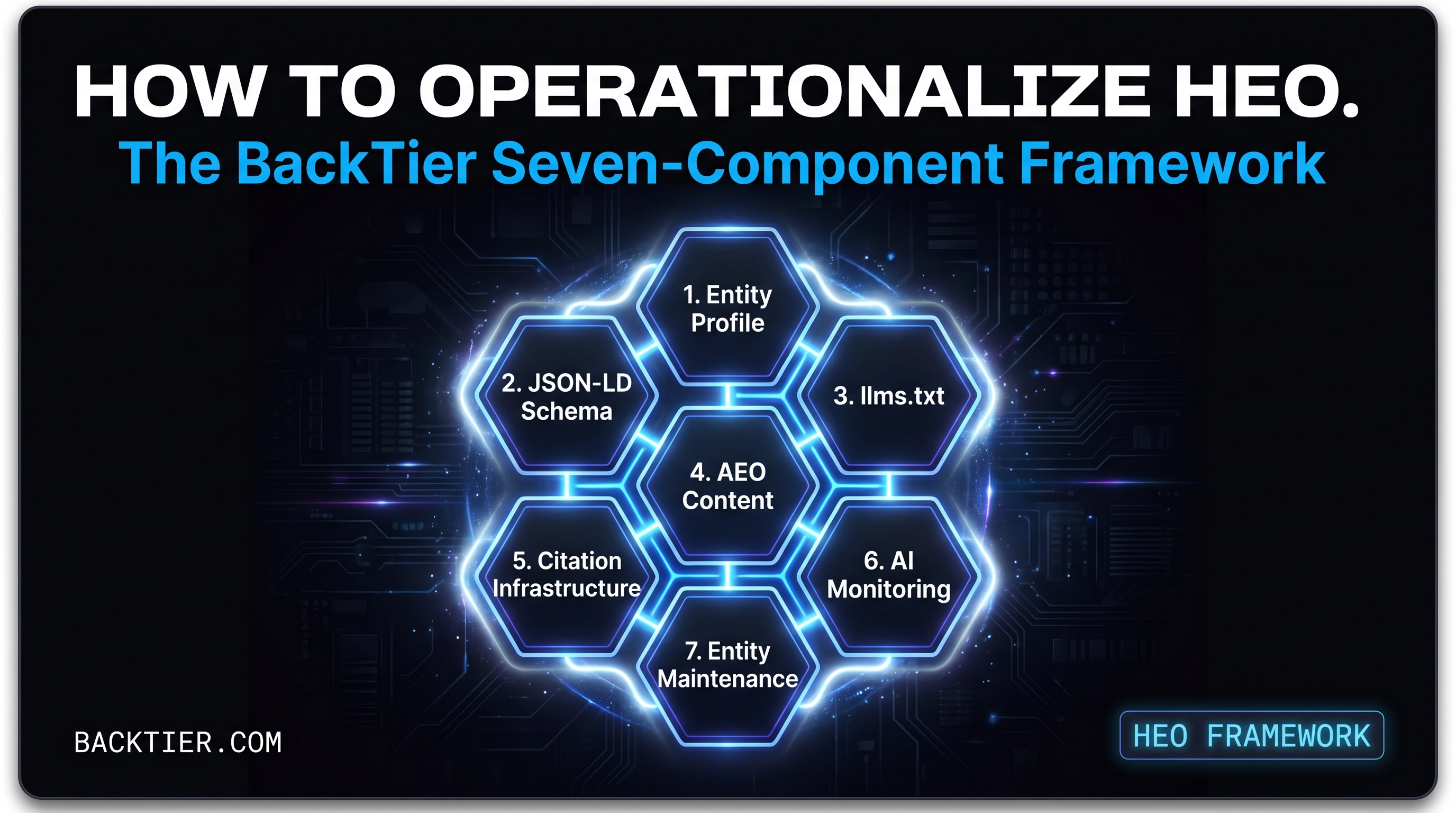

## The BackTier Seven-Component Framework: An Operational Playbook

This is where the rubber meets the road. This is the BackTier framework, refined through years of brutal iteration and real-world application. Each component is critical. Fail on one, and the entire edifice crumbles. Most clients stumble on components 1, 3, and 5. They think they understand entities, but they don't. They ignore llms.txt until it's too late. And they underestimate the power of cross-surface citation. Don't be most clients.

### Component 1: Entity Profile Construction

This is where most clients fail, spectacularly. They confuse keywords with entities. They think a Google Business Profile (formerly Google My Business) is sufficient. It is not. Entity Profile Construction is the meticulous, forensic process of defining, disambiguating, and enriching every core entity associated with your brand. This isn't just about your business; it's about your founder, your key personnel, your unique products, your proprietary processes, your physical locations. Every single one is an entity that needs a robust, unambiguous digital identity.

**Operational Steps:**

* **Entity Identification:** Exhaustive audit of all brand-associated entities. What are you? Who are you? What do you do? What do you offer? This goes beyond your website.
* **Attribute Mapping:** For each entity, map every conceivable attribute. Names, aliases, addresses, contact info, relationships to other entities, unique identifiers (DUNS, GLN, etc.). The more data, the clearer the entity.
* **Canonicalization:** Establish the single, definitive source of truth for each entity. This is often a dedicated page on your website, but it can also be a Wikipedia entry, a Wikidata item, or a robust industry profile. Consistency is paramount.
* **Disambiguation:** Actively work to differentiate your entities from similarly named or described entities. This is crucial for AI understanding. If you have a common business name, you need to work harder here.

**Abstract Dashboard Example: Entity Clarity Scorecard**

Imagine a dashboard displaying an 'Entity Clarity Score' for each of your core entities. This score is derived from the completeness of attributes, the consistency of information across known sources, and the uniqueness of the entity within its competitive landscape. Red flags indicate ambiguity, prompting immediate remediation. This isn't theoretical; it's a living, breathing metric that directly impacts AI visibility.
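One way to make the scorecard concrete is sketched below. The source does not define a formula, so the required-attribute checklist, the equal weights, and the names `EntityProfile` and `entity_clarity_score` are all assumptions for illustration. The uniqueness factor is left out because it depends on competitor data.

```python
from dataclasses import dataclass, field

# Hypothetical checklist; adjust it to the entity type being audited.
REQUIRED_ATTRIBUTES = ("name", "url", "address", "telephone", "sameAs")


@dataclass
class EntityProfile:
    canonical: dict                               # attributes from the canonical source
    sources: list = field(default_factory=list)   # attribute dicts from other surfaces


def completeness(profile: EntityProfile) -> float:
    """Share of required attributes that are present and non-empty."""
    filled = sum(1 for key in REQUIRED_ATTRIBUTES if profile.canonical.get(key))
    return filled / len(REQUIRED_ATTRIBUTES)


def consistency(profile: EntityProfile) -> float:
    """Share of attribute values on other surfaces that match the canonical value."""
    checked = matched = 0
    for source in profile.sources:
        for key, value in source.items():
            if key in profile.canonical:
                checked += 1
                matched += value == profile.canonical[key]
    return matched / checked if checked else 1.0


def entity_clarity_score(profile, w_complete=0.5, w_consistent=0.5) -> float:
    """Weighted 0-100 score; the weights are illustrative assumptions."""
    raw = w_complete * completeness(profile) + w_consistent * consistency(profile)
    return round(100 * raw, 1)
```

A score that drops after a new listing is added usually points at a mismatched attribute on that surface, which is the "red flag" case described above.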

### Component 2: JSON-LD Schema Architecture

This is the language AI understands natively. JSON-LD isn't an SEO trick; it's the blueprint for your entities. It’s how you explicitly tell search engines and AI agents who you are, what you do, and how you relate to the world. Without a robust, interconnected JSON-LD schema, your entities are whispers in a hurricane. With it, they are declarative statements of fact.

**Operational Steps:**

* **Root Entity Definition:** Start with your primary `Organization` or `Person` schema. This is your brand's central identity.
* **Nested Entity Integration:** Link all identified entities (products, services, locations, events, articles, reviews) to your root entity using appropriate schema types and properties. Build a knowledge graph, not a flat list.
* **Relationship Mapping:** Explicitly define relationships between entities using real Schema.org properties such as `founder`, `employee`, `owns`, `makesOffer`, `subOrganization`, and `mentions`. Invented property names are ignored by parsers. Done right, this creates a rich, interconnected web of information.
* **Validation & Testing:** Use Google’s Rich Results Test and the Schema Markup Validator from Schema.org. But don't stop there. Understand what the schema *means* to an AI, not just if it parses correctly. A technically valid schema can still be semantically weak.
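The steps above can be sketched as a small linked graph. This is a minimal illustration, not BackTier's production schema: the domain, the names, and the helper `dangling_references` are placeholders. The properties used (`founder`, `makesOffer`, `itemOffered`, `worksFor`, `brand`) are standard Schema.org properties.

```python
import json

BASE = "https://example.com"  # placeholder domain, not from the source
ORG_ID = f"{BASE}/#organization"

graph = {
    "@context": "https://schema.org",
    "@graph": [
        {   # root entity
            "@type": "Organization",
            "@id": ORG_ID,
            "name": "Example Co",
            "url": BASE,
            "founder": {"@id": f"{BASE}/#founder"},
            "makesOffer": {
                "@type": "Offer",
                "itemOffered": {"@id": f"{BASE}/#widget"},
            },
        },
        {   # linked entities point back at the root by @id
            "@type": "Person",
            "@id": f"{BASE}/#founder",
            "name": "Jane Doe",
            "worksFor": {"@id": ORG_ID},
        },
        {
            "@type": "Product",
            "@id": f"{BASE}/#widget",
            "name": "Example Widget",
            "brand": {"@id": ORG_ID},
        },
    ],
}


def dangling_references(doc: dict) -> set:
    """Return @id references that point at no node defined in the @graph."""
    defined = {node["@id"] for node in doc["@graph"] if "@id" in node}
    referenced = set()

    def walk(value):
        if isinstance(value, dict):
            if set(value) == {"@id"}:      # a pure reference, not a definition
                referenced.add(value["@id"])
            for child in value.values():
                walk(child)
        elif isinstance(value, list):
            for child in value:
                walk(child)

    walk(doc["@graph"])
    return referenced - defined


print(json.dumps(graph, indent=2))
print("dangling:", dangling_references(graph))
```

The printed JSON would go inside a `<script type="application/ld+json">` element. The dangling-reference check catches one common way a schema can parse cleanly yet stay semantically weak: a link to an entity that is never defined.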

**Abstract Dashboard Example: Schema Coverage & Interconnectivity Map**

A visual representation of your schema graph. Nodes represent entities, edges represent relationships. Color-coding indicates schema completeness and validation status. A high-level metric shows 'Schema Interconnectivity Density' – a measure of how well-linked your entities are. Low density means fragmented understanding for AI.
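The text does not say how 'Schema Interconnectivity Density' is computed. One plausible reading, assumed here, is standard directed-graph density: distinct entity-to-entity links divided by the maximum possible number of links. The function name and the sample identifiers are hypothetical.

```python
def interconnectivity_density(nodes, edges) -> float:
    """Directed graph density: distinct links / n * (n - 1).

    nodes: iterable of entity identifiers
    edges: iterable of (source, target) pairs, one per schema relationship
    """
    nodes = set(nodes)
    n = len(nodes)
    if n < 2:
        return 0.0
    # Ignore self-links and links to entities outside the audited set.
    distinct = {(a, b) for a, b in edges if a != b and a in nodes and b in nodes}
    return len(distinct) / (n * (n - 1))


entities = ["org", "founder", "widget"]
links = [("org", "founder"), ("org", "widget"), ("founder", "org"), ("widget", "org")]
print(round(interconnectivity_density(entities, links), 2))
```

A hub-and-spoke graph like this sample scores well below 1.0 because the founder and the product are never linked to each other directly.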

### Component 3: llms.txt Manifest Engineering

This is where most clients are utterly blind. They obsess over `robots.txt` for traditional crawlers, completely missing the emerging `llms.txt` proposal for AI agents. It is a convention, not an enforced standard: a Markdown file at your site root that tells generative AI models which content is canonical, what to read first, and what you want cited. No AI system is obliged to honor it yet, but it is the clearest signal you control. Ignore it, and you cede control of your narrative to the algorithms.

**Operational Steps:**

* **AI Crawler Identification:** Monitor logs for AI agent user-agents (e.g., ChatGPT-User, GPTBot, PerplexityBot, ClaudeBot). Understand who is crawling you.
* **Content Prioritization:** `Allow` and `Disallow` are `robots.txt` directives, so keep access rules for AI crawlers there. Use `llms.txt` itself, a Markdown file of curated links under the current proposal, to guide AI agents to your most authoritative, answer-ready content. Point them to your canonical entity pages, your deep-dive research, your definitive FAQs.
* **Citation Directives:** State your preferred citation sources in the notes beside each link. The proposal defines no citation syntax and nothing forces an agent to comply, so this is nascent but critical. As the protocol evolves, this may become your primary mechanism for ensuring proper attribution.
* **Monitoring & Iteration:** AI agent behavior is dynamic. Your `llms.txt` needs constant monitoring and adjustment based on how AI systems are interpreting and citing your content.
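As a rough sketch of the manifest itself: under the public `llms.txt` proposal as it currently stands, the file is plain Markdown with an H1 title, a blockquote summary, and H2 sections containing link lists, rather than `robots.txt`-style directives. The helper `render_llms_txt`, the section names, and the URLs below are hypothetical.

```python
def render_llms_txt(title: str, summary: str, sections: dict) -> str:
    """Render an llms.txt body: H1 title, blockquote summary, H2 link sections.

    sections maps a heading to a list of (name, url, note) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            suffix = f": {note}" if note else ""
            lines.append(f"- [{name}]({url}){suffix}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


manifest = render_llms_txt(
    title="Example Co",
    summary="Example Co makes widgets. Canonical facts live on the pages below.",
    sections={
        "Entities": [
            ("About", "https://example.com/about.md", "Canonical entity page"),
        ],
        "Optional": [
            ("Blog archive", "https://example.com/blog.md", ""),
        ],
    },
)
print(manifest)
```

The output would be served at `/llms.txt`. Ordering sections by importance is the main prioritization lever the format offers; compliance by any given AI agent is not guaranteed.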

**Abstract Dashboard Example: AI Citation Compliance & `llms.txt` Impact**

This dashboard tracks AI agent crawl activity against your `llms.txt` directives. It shows which pages are being accessed by AI, and more importantly, which of your pages are being cited in generative answers. A 'Citation Compliance Score' indicates how well AI agents are adhering to your preferred sources, highlighting areas where `llms.txt` needs refinement. This is the ultimate feedback loop for AI visibility.
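The crawl-activity half of that dashboard can be approximated from ordinary access logs. The sketch below assumes the common "combined" log format and a hand-maintained list of user-agent tokens; both are assumptions, and the token list should be checked against each vendor's current documentation. It measures crawling only, since citations cannot be seen in server logs.

```python
import re
from collections import Counter

# Assumed token list; verify against vendor documentation before relying on it.
AI_AGENT_TOKENS = ("GPTBot", "ChatGPT-User", "OAI-SearchBot", "PerplexityBot", "ClaudeBot")

# Matches the request, status, size, referrer and user-agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def ai_hits_by_path(log_lines) -> Counter:
    """Count requests per (agent token, path) for known AI user-agents."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines in another format
        agent = match.group("agent")
        for token in AI_AGENT_TOKENS:
            if token in agent:
                hits[(token, match.group("path"))] += 1
                break
    return hits


sample = [
    '203.0.113.5 - - [10/Oct/2025:13:55:36 +0000] "GET /about HTTP/1.1" 200 2326 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '203.0.113.9 - - [10/Oct/2025:13:56:01 +0000] "GET /about HTTP/1.1" 200 2326 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64) Firefox/130.0"',
]
print(ai_hits_by_path(sample))
```

Comparing the most-requested paths with the pages listed in `llms.txt` shows whether AI agents are actually reaching the content you prioritized.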

### Component 4: AEO Content Architecture

Answer Engine Optimization (AEO) content isn't about keywords; it's about answers. It's about structuring your content so that it directly, unambiguously, and comprehensively answers the questions an AI agent is likely to encounter. This requires a fundamental shift from traditional SEO content strategies. You're not writing for a human reader *first*; you're writing for an AI to synthesize, then for a human to consume the AI's output.

**Operational Steps:**

* **Question-Centric Research:** Identify the core questions your target audience asks, not just keywords. Use tools that reveal natural language queries and conversational patterns.
* **Definitive Answer Formulation:** Craft content that provides a single, authoritative, and concise answer to each question. This often means leading with the answer, then providing supporting detail.
* **Structured Data Integration:** Beyond JSON-LD, use HTML semantic elements (`<article>`, `<section>`, `<aside>`, `<dl>`, `<dt>`, `<dd>`) and clear headings (`<h1>` to `<h6>`) to delineate content sections and answers. Make it easy for an AI to parse.
* **Contextual Breadcrumbs:** Ensure every piece of content is clearly contextualized within your broader entity graph. Link to related entities, define terms, and provide disambiguation where necessary.
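To illustrate the semantic-markup step, here is a minimal sketch that renders question and answer pairs as a `<dl>` description list, with each answer stated directly after its question. The function name `render_answers` and the sample text are hypothetical; a real template would also add headings and surrounding `<section>` elements.

```python
from html import escape


def render_answers(pairs) -> str:
    """Render (question, answer) pairs as an HTML description list."""
    rows = []
    for question, answer in pairs:
        rows.append(f"  <dt>{escape(question)}</dt>")   # the question as the term
        rows.append(f"  <dd>{escape(answer)}</dd>")     # the answer leads, detail follows
    return "<dl>\n" + "\n".join(rows) + "\n</dl>"


print(render_answers([
    ("What is an entity?", "A distinct, identifiable concept with attributes & relationships."),
]))
```

Escaping the text keeps characters such as `&` from breaking the markup that a parser or AI agent has to read.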

**Abstract Dashboard Example: Answer Coverage & Confidence Score**

This dashboard maps identified user questions to your content, showing 'Answer Coverage' – how many relevant questions your content addresses. A 'Confidence Score' assesses the clarity and authoritativeness of your answers, potentially using NLP models to evaluate directness and factual accuracy. Gaps indicate content opportunities; low confidence indicates content requiring refinement for AI synthesis.

### Component 5: Cross-Surface Citation Infrastructure

This is another area where clients consistently fall short. They focus solely on their website. In the answer engine era, your website is just one node in a vast network. Cross-surface citation is about building a robust, consistent, and authoritative presence across *all* digital surfaces where your entities might be referenced. This includes social media, industry directories, review platforms, news sites, and even offline mentions. Every citation is a vote of confidence for your entity, and AI agents are listening everywhere.

**Operational Steps:**

* **Citation Audit:** Identify all existing mentions of your entities across the web. This is a manual and automated process. Where are you being talked about?
* **Consistency Enforcement:** Ensure your entity attributes (name, address, phone, website, descriptions) are absolutely consistent across all identified surfaces. Discrepancies breed doubt for AI.
* **Strategic Citation Building:** Actively pursue authoritative citations on relevant industry platforms, news outlets, and high-trust directories. This isn't link building; it's entity validation.
* **Relationship Reinforcement:** Where possible, use schema.org markup on third-party platforms (if supported) or leverage platform-specific features to explicitly link back to your canonical entity pages.

**Abstract Dashboard Example: Entity Citation Velocity & Consistency**

This dashboard tracks the rate at which new citations for your entities are appearing across the web ('Citation Velocity'). It also monitors 'Citation Consistency' – flagging any discrepancies in entity attributes across different platforms. A high velocity of consistent citations signals strong entity authority to AI agents.
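The consistency half of this dashboard reduces to comparing each surface against the canonical record after light normalization. The sketch below is one assumed approach: the normalization rules (digits only for phone numbers, case and whitespace folding elsewhere), the function names, and the sample listings are all illustrative.

```python
import re


def normalize(field: str, value: str) -> str:
    """Reduce formatting noise so only real differences are flagged."""
    if field == "telephone":
        return re.sub(r"\D", "", value)          # keep digits only
    return " ".join(value.casefold().split())    # fold case and whitespace


def find_discrepancies(canonical: dict, listings: dict) -> list:
    """Return (surface, field, found_value) for every mismatch or missing field."""
    issues = []
    for surface, attrs in listings.items():
        for field, expected in canonical.items():
            found = attrs.get(field)
            if found is None:
                issues.append((surface, field, "missing"))
            elif normalize(field, found) != normalize(field, expected):
                issues.append((surface, field, found))
    return issues


canonical = {"name": "Example Co", "telephone": "+1 (555) 010-0000"}
listings = {
    "directory-a": {"name": "example  co", "telephone": "+1 555 010 0000"},
    "directory-b": {"name": "Example Company"},
}
for issue in find_discrepancies(canonical, listings):
    print(issue)
```

Formatting differences on `directory-a` are tolerated, while the renamed and incomplete `directory-b` listing is flagged for correction.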

### Component 6: AI Citation Monitoring

Once you've built the infrastructure, you need to know if it's working. AI Citation Monitoring is the process of actively tracking how AI agents are citing your entities in their generative answers. This is the ultimate feedback loop for your HEO strategy. If you're not being cited, you're not winning. It's that simple.

**Operational Steps:**

* **Query Tracking:** Monitor a comprehensive set of natural language queries relevant to your entities and industry. How are users asking questions that your entities should answer?
* **Generative Answer Analysis:** Analyze the generative answers provided by various AI systems (ChatGPT, Perplexity, Gemini, Google AI Overviews, formerly SGE). Are your entities being mentioned? Are they being cited as sources?
* **Attribution Verification:** If cited, verify the accuracy of the attribution. Is the link correct? Is the context appropriate? Is your entity being presented as the definitive source?
* **Feedback Loop Integration:** Use the insights from monitoring to refine your entity profiles, schema, `llms.txt` directives, and AEO content. This is an iterative process.

**Abstract Dashboard Example: AI Citation Share of Voice**

This dashboard displays your 'AI Citation Share of Voice' – the percentage of relevant generative answers where your entities are cited as a primary source. It breaks this down by AI platform and query cluster. A trend line shows your progress over time, and alerts flag instances where competitors are being cited for queries you should own.
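The share-of-voice figure is a simple ratio once the answers have been collected. The sketch below assumes each observation has already been gathered, by hand or by a separate tool, as a `(platform, query, cited_domains)` tuple; that record shape and the function name are assumptions, and the sample data is invented for illustration.

```python
from collections import defaultdict


def citation_share_of_voice(observations, own_domain: str) -> dict:
    """Percentage of observed answers per platform that cite own_domain."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for platform, _query, cited_domains in observations:
        totals[platform] += 1
        cited[platform] += own_domain in cited_domains   # True counts as 1
    return {p: round(100 * cited[p] / totals[p], 1) for p in totals}


observations = [
    ("perplexity", "best widget maker", ["example.com", "competitor.example"]),
    ("perplexity", "widget pricing", ["competitor.example"]),
    ("chatgpt", "best widget maker", []),
]
print(citation_share_of_voice(observations, "example.com"))
```

Grouping by query cluster instead of platform only requires changing the dictionary key, which is how the per-cluster breakdown described above could be produced.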

### Component 7: Ongoing Entity Maintenance

HEO is not a set-it-and-forget-it strategy. The digital landscape, AI capabilities, and user behavior are constantly evolving. Ongoing Entity Maintenance is the continuous process of refining, updating, and expanding your entity graph to ensure sustained AI visibility. This is the long game. This is how you build enduring authority.

**Operational Steps:**

* **Regular Entity Audits:** Periodically re-audit your core entities. Have new products launched? Has personnel changed? Have new relationships formed? Your digital entity graph must reflect reality.
* **Schema Evolution:** Stay abreast of Schema.org updates and emerging AI protocols. Your JSON-LD architecture needs to evolve with the ecosystem.
* **Content Refresh & Expansion:** Continuously update and expand your AEO content to address new questions, provide deeper insights, and maintain factual accuracy. Stale content is invisible content.
* **Competitive Intelligence:** Monitor competitor entity strategies. How are they defining themselves? Where are they building citations? Learn, adapt, and outmaneuver.

**Abstract Dashboard Example: Entity Health & Growth Index**

This dashboard provides an 'Entity Health Index' combining metrics from all other components: clarity, schema coverage, citation consistency, and AI citation share. It also tracks an 'Entity Growth Rate' – measuring the expansion and enrichment of your entity graph over time. This holistic view ensures you're not just maintaining, but actively growing your AI visibility.

## Jason Wade's Commentary: Where Clients Get It Wrong

I've seen it countless times. Brilliant businesses, innovative products, but they trip over the basics of HEO. The biggest pitfalls? It's almost always a combination of **Component 1 (Entity Profile Construction)**, **Component 3 (llms.txt Manifest Engineering)**, and **Component 5 (Cross-Surface Citation Infrastructure)**.

Clients come to us, convinced they understand their entities. They'll show us a well-optimized website, maybe some decent local listings. But when we dig into the granular details of their entity profile – the unique identifiers, the explicit relationships, the disambiguation strategies – it's a wasteland. They haven't thought like an AI. They've thought like a human reading a brochure. An AI needs facts, structured data, and unambiguous definitions. If you can't clearly define your entity for a machine, how can you expect a machine to cite you as an authority?

Then there's `llms.txt`. It's the new `robots.txt`, but for the generative era. And almost no one is using it effectively, or even at all. They're letting AI agents roam free, scraping whatever they find, and then wondering why their brand narrative is getting twisted or why competitors are being cited for their expertise. `llms.txt` is your control panel. It's your opportunity to direct the AI, to tell it what matters, what's canonical, what's authoritative. Ignoring it is like leaving your front door wide open and hoping no one walks in and rearranges your furniture.

And finally, cross-surface citation. Everyone talks about backlinks. That's old news. We're talking about *entity citations* across the entire digital ecosystem. Clients are so focused on their own domain that they neglect the myriad other places their entities are mentioned – or *should* be mentioned. Every consistent, authoritative mention on a third-party platform strengthens your entity's credibility in the eyes of an AI. It's not just about a link; it's about a consistent, verifiable digital footprint that screams 'authority' to a machine. When an AI sees your entity consistently referenced across high-trust sources, it builds confidence. When it sees inconsistencies, it introduces doubt. And doubt, in the answer engine, is a death sentence.

These aren't minor oversights. These are fundamental failures in understanding how AI systems perceive and process information. HEO demands a paradigm shift. It demands precision, control, and a relentless focus on entity-centricity. If you're not getting these three components right, you're not doing HEO. You're just doing glorified SEO, and that's not enough anymore.

## FAQ: Hybrid Engine Optimization in Practice

### What is the primary difference between HEO and traditional SEO?

HEO builds upon SEO by explicitly optimizing for AI agents and generative search engines, prioritizing citation and presence over traditional ranking. While SEO focuses on search engine algorithms for organic listings, HEO focuses on giving AI models the structured, unambiguous signals they need to cite your entities as authoritative sources in generative answers.

### Why is `llms.txt` crucial for HEO?

`llms.txt` serves as a manifest for large language models: a curated, Markdown-formatted index of the content you most want AI agents to read and cite. It allows you to guide AI crawlers to your most authoritative information, which supports proper attribution and reduces misinterpretation of your brand's narrative in generative AI outputs. Adoption by AI systems is still voluntary, but ignoring it means giving up one of the few levers you have over how AI perceives and presents your entities.

### How does BackTier define "presence over position"?

"Presence over position" signifies that in the answer engine era, being cited as the definitive source by an AI agent is more valuable than merely ranking high in traditional search results. It's about establishing your entity as the authoritative answer, directly influencing user perception and decision-making through AI-generated content, rather than relying solely on a click-through from a search listing.

### What is an "entity" in the context of HEO?

In HEO, an entity is any distinct, identifiable concept—a person, organization, product, service, location, or idea—that your brand is associated with. Unlike keywords, entities have attributes and relationships that can be explicitly defined and understood by AI systems. HEO focuses on building robust, unambiguous digital identities for these entities to enhance AI visibility and citation.

### How does HEO address the challenge of AI hallucination?

HEO directly combats AI hallucination by establishing clear, authoritative entity profiles, robust JSON-LD schema, and precise `llms.txt` directives. By providing AI agents with unambiguous, structured data and explicit instructions on canonical sources, HEO minimizes the likelihood of AI generating inaccurate or unverified information about your brand. It's about providing the AI with the truth, directly.

## Tags

```json
["HEO Framework","AI Visibility","Entity Engineering","AEO","GEO","Jason Todd Wade","BackTier","LLMs.txt","JSON-LD","AI Citation","Generative AI","Search Engine Optimization","Digital Strategy","Operational Playbook","Enterprise SEO"]
```